AI Factories:

NVIDIA GTC was all about Energy, Data Centers & Infrastructure Applications

Short note this week as I spent all of it in and around NVIDIA GTC 2026.

This NVIDIA GTC was different from previous ones, or at least that’s what many people told me on the conference floor. The focus on energy and infrastructure was not just visible but front and center this time around.

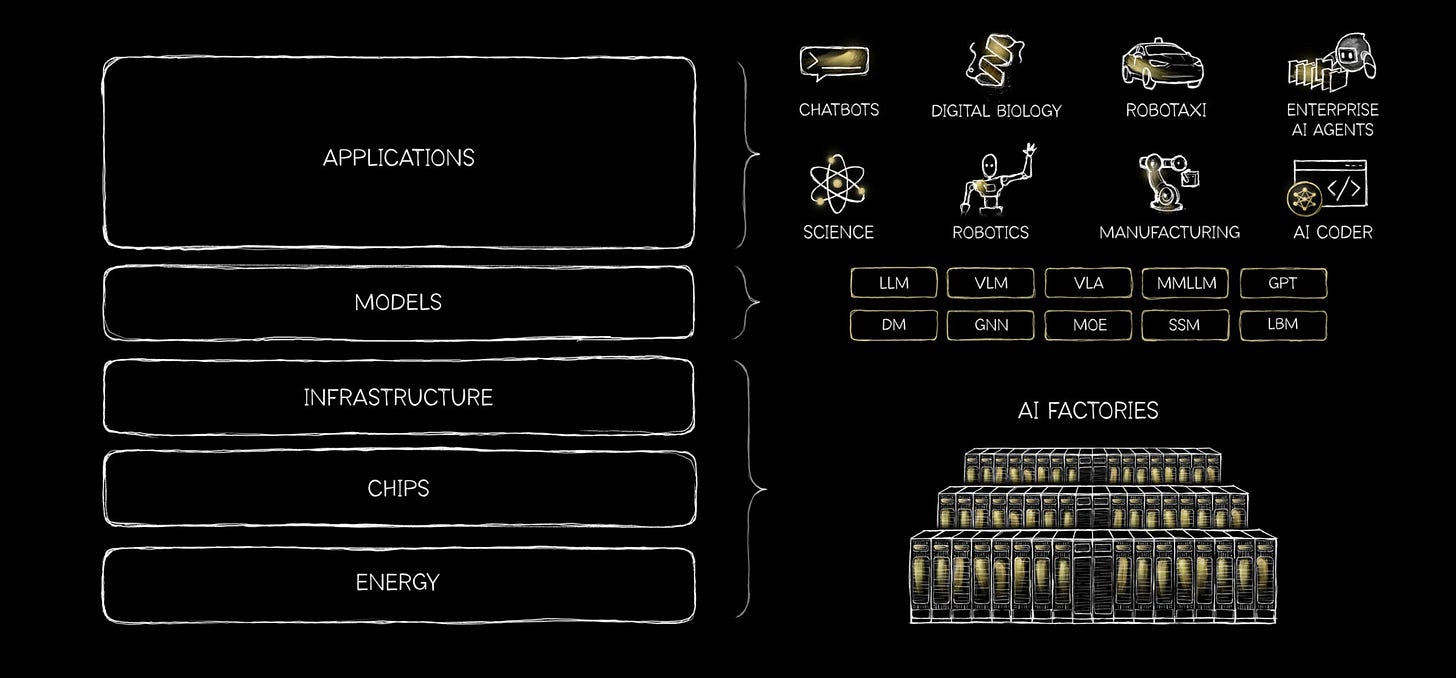

The expo halls were littered with the latest in data center technologies (mostly centered around the NVIDIA chip, Vera Rubin). The majority of the folks with booths were in the AI factory bucket in some way, shape, or form. Even many of the AI applications were focused on this layer (see the five-layer cake below).

This didn’t feel like a hardware conference so much as a heavy industry conference. In retrospect, that’s likely what I should have expected.

Once that heavy industry thought showed up, it didn’t really leave. I kept noticing it in small ways. Someone talking about "gigawatt scale" as though it were the next obvious step. A booth looping visuals of bulk infrastructure buildouts instead of dashboards. Over at Panthalassa, there was this quiet confidence around bringing gigawatts online in a short time. I remember standing there thinking that sounds incredibly aggressive, but also, no one here seems to be questioning the direction. They are only questioning the pace. There was an energy in the room that felt different from usual.

AI is solving its own problems

This year, I also saw a ton of companies focused on physics engines, CFD, and AI-aided simulation and, most importantly, on extracting the most out of the energy stack.

A couple that stood out: HammerheadAI & Phaidra. Both are broadly focused on the same thing: how to unlock the stranded power at a data center. Rahul Kar, CEO at Hammerhead, has extensive expertise in this area. He mentioned that Hammerhead has gone all in on inference data centers as the big bet of the future. The way he explained it to me made a lot of sense. In inference, there is something called a KV cache, which stores the attention keys and values for tokens the model has already processed so that later steps don't have to recompute them. As this cache grows, though, inefficiencies creep in and GPUs start sitting idle. If you can optimize the cache, and you control both token and compute scheduling, you can effectively unlock the idle compute sitting inside a power-constrained data center and convert stranded megawatts into billable tokens.
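For readers who haven't run into a KV cache before, here is a rough toy sketch of the idea in Python. It is my own illustration, not Hammerhead's system or any real inference engine: `project_kv` is a hypothetical stand-in for the expensive per-token attention work, and the token counts are made up. It only shows the trade-off: caching cuts repeated compute, but the cache itself keeps growing with the sequence.

```python
# Toy illustration of a KV cache; not any vendor's implementation.
# project_kv is a hypothetical stand-in for the expensive per-token work.

def project_kv(token_id):
    """Pretend to compute a token's attention key and value."""
    return (token_id * 2, token_id * 3)


def decode_without_cache(prompt, new_tokens):
    """Recompute keys/values for the whole sequence at every decode step."""
    projections = 0
    sequence = list(prompt)
    for token in new_tokens:
        sequence.append(token)
        kv = [project_kv(t) for t in sequence]  # everything, every step
        projections += len(kv)
    return projections


def decode_with_cache(prompt, new_tokens):
    """Compute each token's key/value once and keep it in the cache."""
    cache = [project_kv(t) for t in prompt]  # prefill: one pass over the prompt
    projections = len(cache)
    for token in new_tokens:
        cache.append(project_kv(token))  # only the new token is projected
        projections += 1
    return projections, len(cache)


prompt = list(range(100))            # 100 prompt tokens (made-up size)
new_tokens = list(range(100, 120))   # 20 generated tokens (made-up size)

no_cache = decode_without_cache(prompt, new_tokens)
with_cache, cache_entries = decode_with_cache(prompt, new_tokens)

print("projections without cache:", no_cache)
print("projections with cache:   ", with_cache)
print("entries held in cache:    ", cache_entries)
```

The cached version does far fewer projections, but it has to hold an entry for every token seen so far. In a real deployment that memory lives on the GPU, which is where the scheduling and optimization problem described above comes from.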

Phaidra is approaching a similar problem from the cooling and facility operations side: using reinforcement learning to run data center HVAC and power systems more efficiently. Different entry point, same underlying insight. The energy stack is the next frontier for optimization, and AI is increasingly the tool being used to do it.

Which brought me to the thought I kept returning to on the floor: AI is beginning to solve its own infrastructure problems. The same capabilities being deployed for enterprise applications are being turned inward, on the factories building those capabilities. That loop (physics simulation, CFD, and real-time power orchestration, applied to the AI supply chain itself) is probably underappreciated right now.

GTC used to feel like a product launch with a conference attached. This year it felt like an industry convening. Heavy industry, with long time horizons and gigawatt ambitions. Whether or not all of it materializes at the pace being discussed, the direction is not really in question anymore. Only the pace.

Some special mentions I thought were super interesting

Firmus - An Australian neocloud co-designing compute, cooling, and grid signals into a single architecture. Targeting 1.6GW of renewable-powered AI factories by 2028. NVIDIA-backed and moving fast.

Yotta - India's sovereign compute play. 20,000 Blackwell Ultra GPUs today, scaling to 80,000 by FY27. The subtext was clear: India is done being a consumer of compute.

Utilidata - Started in grid optimization, now bringing that same intelligence inside the data center. Their argument: a properly orchestrated 100MW connection should realistically support 130MW of AI compute.
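To put Utilidata's numbers in perspective, here is the back-of-the-envelope arithmetic as a quick Python sketch. It only restates the two figures quoted above; the variable names are mine, and nothing here models how the orchestration would actually work.

```python
# Back-of-the-envelope arithmetic on the figures quoted above.
grid_connection_mw = 100      # size of the grid connection
supported_compute_mw = 130    # AI compute Utilidata argues it could support

extra_compute_mw = supported_compute_mw - grid_connection_mw
uplift_pct = 100 * extra_compute_mw / grid_connection_mw

print(f"Extra compute: {extra_compute_mw} MW ({uplift_pct:.0f}% uplift)")
```

In other words, the claim amounts to roughly 30% more AI compute from the same grid connection.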

Crusoe - This one surprised me because I hadn't realized they had moved from being purely a neo energy player to becoming a neocloud. They divested bitcoin mining, raised over $1B, and launched managed inference built around proprietary KV cache optimization. The energy DNA is still there; the ambition is now full-stack.

I’ll leave you with one scene from the floor today (yes, there were many robots there and so much more; I just didn’t have time to cover it all in one post!)